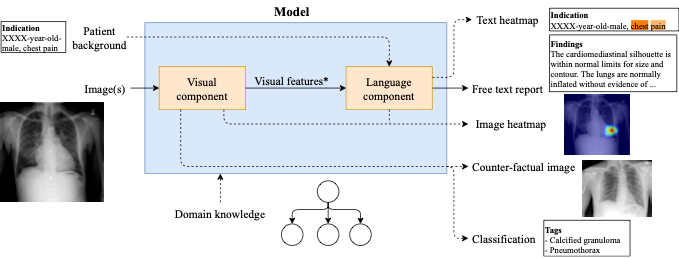

Artificial Intelligence (AI) has advanced rapidly in recent years, bringing applications once seen as science fiction, such as autonomous vehicles, speech recognition, and automated language translation, into everyday use. In medicine, AI shows promise for supporting clinicians, particularly in radiology, where the volume of imaging studies far outstrips the capacity of specialists. Automated radiological report generation (RadRG) can alleviate this burden by producing draft findings from medical images. However, current deep-learning approaches often act as “black boxes” that rely more on learned language patterns than on the visual evidence in the images, and they fall short of the accuracy required for clinical deployment.

State-of-the-art RadRG models achieve strong scores on natural-language fluency metrics (e.g., BLEU, ROUGE) but underperform on clinical correctness, in part because large, expert-annotated datasets are scarce and current architectures do not fully exploit visual features. In addition, most research and available datasets focus on English reports, leaving a gap in resources and methods for other languages such as Spanish, despite demonstrated need and potential impact in Spanish-speaking healthcare settings.
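The gap between fluency and clinical correctness can be illustrated with a toy example. The sketch below is only an illustration: `unigram_f1` is a crude stand-in for overlap metrics such as BLEU or ROUGE (not their actual definitions), `mentions_effusion` is a hypothetical rule-based labeler, and both reports are invented for the example.

```python
from collections import Counter


def unigram_f1(reference: str, candidate: str) -> float:
    """Token-overlap F1: a simplified stand-in for BLEU/ROUGE-style metrics."""
    ref = Counter(reference.lower().split())
    cand = Counter(candidate.lower().split())
    overlap = sum((ref & cand).values())  # clipped count of shared tokens
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


def mentions_effusion(report: str) -> bool:
    """Hypothetical toy labeler: True if an effusion is asserted, not negated."""
    text = report.lower()
    return "effusion" in text and "no pleural effusion" not in text


# Invented example reports that differ in a single, clinically decisive word.
reference = "the heart size is normal and there is no pleural effusion"
candidate = "the heart size is normal and there is a pleural effusion"

overlap_score = unigram_f1(reference, candidate)       # 10 of 11 tokens match
labels_agree = mentions_effusion(reference) == mentions_effusion(candidate)

print(f"overlap F1: {overlap_score:.2f}, clinical labels agree: {labels_agree}")
```

Here the candidate shares 10 of 11 tokens with the reference, so the overlap score is about 0.91, yet the clinical finding is reversed. Real evaluations would use the full metric definitions and a validated clinical labeler rather than these toy functions.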

Goals

- Investigate representation-learning techniques, including multitask learning with auxiliary vision tasks and multimodal learning via contrastive models (e.g., CLIP), to enrich visual encoders and improve the clinical accuracy of RadRG.

- Create and publicly release a large chest X-ray dataset with Spanish-language reports, and develop transfer-learning and meta-learning approaches to enable effective cross-lingual RadRG from English to Spanish.
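For readers unfamiliar with contrastive image-text models, the following is a minimal sketch of the symmetric contrastive objective used by CLIP-style models, written in plain Python for readability. It is not the project's implementation: the embeddings are invented two-dimensional vectors, the function names are hypothetical, and the temperature of 0.07 is an assumed value chosen for illustration.

```python
import math


def l2_normalize(vector):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def cross_entropy_diag(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        row_max = max(row)  # subtract the max for numerical stability
        log_sum_exp = row_max + math.log(sum(math.exp(x - row_max) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)


def clip_style_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive loss: pair i of images and texts should match."""
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    # Similarity matrix: rows index images, columns index texts.
    logits = [
        [sum(a * b for a, b in zip(img, txt)) / temperature for txt in txts]
        for img in imgs
    ]
    logits_transposed = [list(col) for col in zip(*logits)]
    image_to_text = cross_entropy_diag(logits)
    text_to_image = cross_entropy_diag(logits_transposed)
    return 0.5 * (image_to_text + text_to_image)


# Invented toy embeddings: each image is close to the report at the same index.
image_embeddings = [[1.0, 0.0], [0.0, 1.0]]
report_embeddings = [[1.0, 0.1], [0.1, 1.0]]

aligned = clip_style_loss(image_embeddings, report_embeddings)
mismatched = clip_style_loss(image_embeddings, report_embeddings[::-1])
print(f"aligned pairs: {aligned:.4f}, mismatched pairs: {mismatched:.4f}")
```

The loss is small when each image embedding lies closest to its own report and large when the pairing is shuffled, which is the signal that pulls visual representations toward the language describing them.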

Methods

To achieve these goals, the project will:

- Design and evaluate multitask learning pipelines that jointly learn auxiliary tasks (e.g., abnormality detection, bounding‐box prediction) alongside report generation to strengthen visual feature learning.

- Fine‐tune and integrate multimodal contrastive models (such as CLIP) to further ground visual representations in language, exploring both pre‐training and attention‐supervision strategies.

- Develop a protocol for ethical data collection and annotation of Spanish‐language radiology reports, collaborate with clinical experts to build and validate this dataset, and apply transfer‐learning techniques to adapt English‐trained RadRG models to Spanish.
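The multitask pipelines in the first item above are commonly trained by minimizing a weighted sum of per-task losses. The sketch below shows that pattern under stated assumptions: the choice of loss per task, the function names, the weights `w_abn` and `w_box`, and all numeric values are hypothetical and only illustrate the idea.

```python
import math


def token_nll(target_token_probs):
    """Report-generation loss: mean negative log-likelihood of reference tokens."""
    return -sum(math.log(p) for p in target_token_probs) / len(target_token_probs)


def binary_cross_entropy(pred_probs, labels, eps=1e-12):
    """Abnormality-detection loss over independent binary labels."""
    total = 0.0
    for p, y in zip(pred_probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(labels)


def l1_box_loss(pred_box, true_box):
    """Bounding-box loss: mean absolute error over the box coordinates."""
    return sum(abs(p - t) for p, t in zip(pred_box, true_box)) / len(true_box)


def multitask_loss(report_loss, abnormality_loss, box_loss, w_abn=0.5, w_box=0.5):
    """Weighted sum; the weights are assumed hyperparameters to be tuned."""
    return report_loss + w_abn * abnormality_loss + w_box * box_loss


# Invented model outputs for a single training example.
report = token_nll([0.9, 0.8, 0.95])                     # probs of reference tokens
abnormality = binary_cross_entropy([0.7, 0.2], [1, 0])   # two findings
box = l1_box_loss([0.10, 0.20, 0.55, 0.60], [0.10, 0.25, 0.50, 0.60])

total = multitask_loss(report, abnormality, box)
print(f"report={report:.3f} abnormality={abnormality:.3f} box={box:.3f} total={total:.3f}")
```

Because all three losses backpropagate into a shared visual encoder, the auxiliary tasks can push it toward features that reflect image content. How the weights are set (fixed, scheduled, or learned) is a design choice this sketch leaves open.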

Expected Results and Impact

This research will deliver:

- Novel RadRG methods that leverage multitask and multimodal learning to achieve higher clinical correctness, validated through quantitative metrics and user studies with radiologists.

- A publicly available Spanish chest X‐ray report dataset, filling a critical gap for non‐English AI research in radiology.

- Cross‐lingual RadRG models enabling robust report generation in Spanish with minimal additional data, fostering broader adoption of AI‐assisted radiology globally.

- Open‐source code, software tools, and peer‐reviewed publications to disseminate methods and facilitate further advances at the intersection of AI, medical imaging, and natural language generation.